I generated 352 AI mascots. The right one wasn't among them.

I'm building a SaaS called Morphow. Modular white-label webapps for businesses: onboarding, training, quizzes, surveys. The kind of product that needs a strong identity. Not a Canva logo. A mascot. A character that can live inside the interface, react to user actions, embody the brand.

At first, I didn't have much to go on. I was picturing Ditto the Pokemon, a Barbapapa (I promise this isn't childhood nostalgia talking...), some white floating thing. That's it. The rest came through iteration.

I decided to generate it myself. With my own tool.

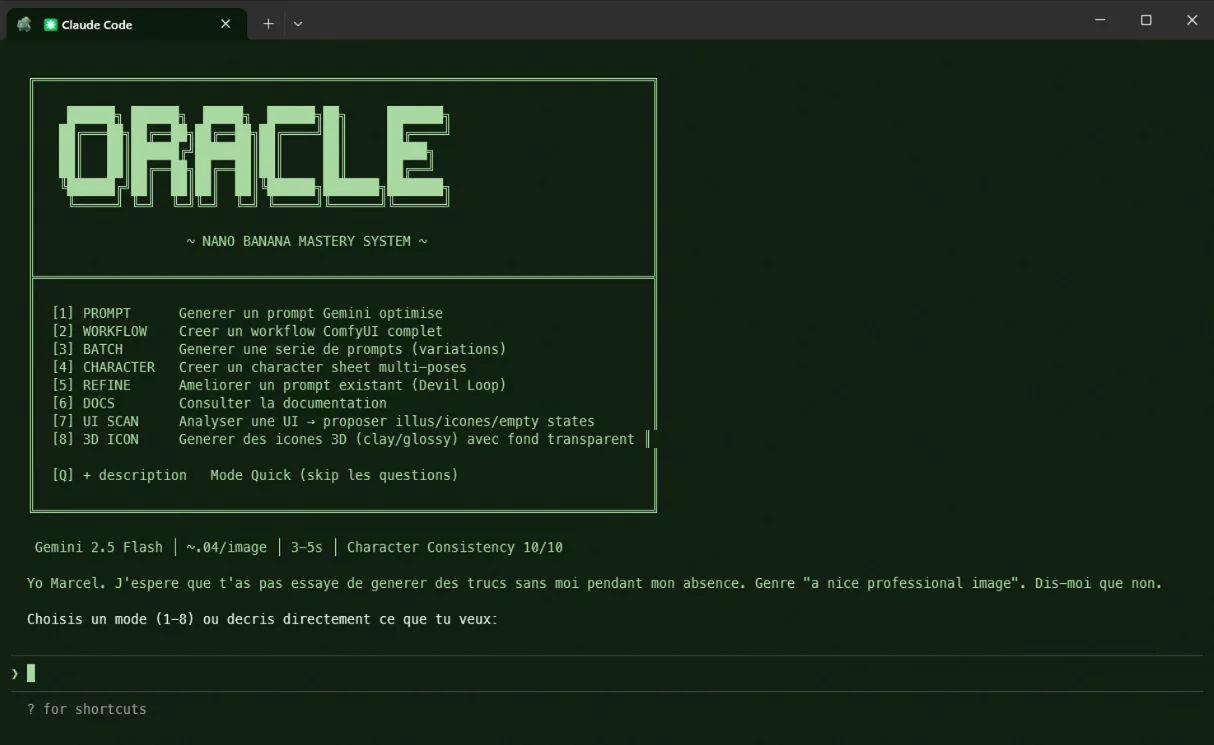

The tool: /prompt-oracle

Before the mascot, I need to talk about the tool that made it. It's not Midjourney. It's not DALL-E. It's an agent I built myself.

/prompt-oracle is a Claude Code skill that orchestrates ComfyUI locally with Gemini 2.5 Flash as the generation model. The agent writes prompts, configures workflows, runs generations, and organizes outputs. It has 8 modes, including simple prompt, full ComfyUI workflow, batch variations, multi-pose character sheets, iterative refinement, and 3D icon generation.
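
If you haven't scripted ComfyUI before: its local server (port 8188 by default) queues API-format workflows that are POSTed as JSON to `/prompt`. The sketch below shows how a "batch variations" mode *could* turn one prompt into several queued jobs that differ only by seed. It is not /prompt-oracle's actual code. The node IDs ("3", "6"), class names and input names are hypothetical placeholders for whatever your exported workflow contains. The requests are built but never sent.

```python
import copy
import json
import urllib.request

# ComfyUI's local server listens on port 8188 by default and queues
# API-format workflows POSTed to /prompt.
COMFY_URL = "http://127.0.0.1:8188/prompt"

# Hypothetical placeholder workflow: real node IDs, class names and inputs
# come from exporting your own workflow from ComfyUI in API format.
BASE_WORKFLOW = {
    "3": {"class_type": "ImageGenerator", "inputs": {"seed": 0}},
    "6": {"class_type": "PromptText", "inputs": {"text": ""}},
}


def build_variation_requests(prompt_text, n=4, base_seed=1000):
    """Build (but do not send) n POST requests that differ only by seed."""
    reqs = []
    for i in range(n):
        workflow = copy.deepcopy(BASE_WORKFLOW)  # never mutate the template
        workflow["6"]["inputs"]["text"] = prompt_text
        workflow["3"]["inputs"]["seed"] = base_seed + i
        body = json.dumps({"prompt": workflow}).encode("utf-8")
        reqs.append(urllib.request.Request(
            COMFY_URL,
            data=body,
            headers={"Content-Type": "application/json"},
        ))
    return reqs
```

With a ComfyUI instance running, you would queue each request with `urllib.request.urlopen(req)`. Only the seed changes and the prompt stays fixed, so the 4 images in a batch can be compared side by side.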

The key part: ComfyUI runs locally on my machine, but Gemini 2.5 Flash is still a cloud API. The difference is price: ~$0.04 per image, versus roughly $0.50 on Midjourney. I can generate 4 variations in 15 seconds and iterate 352 times without thinking about budget.
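
Here is the arithmetic behind those figures, using only the approximate prices and batch timing quoted in this article. Real API pricing varies by model version and output size, so treat the constants as assumptions.

```python
import math

# Approximate figures quoted in the article; real pricing varies.
GEMINI_FLASH_PER_IMAGE = 0.04  # USD
MIDJOURNEY_PER_IMAGE = 0.50    # USD, rough per-image equivalent
IMAGES = 352
BATCH_SIZE = 4
SECONDS_PER_BATCH = 15


def total_cost(n_images, per_image):
    return n_images * per_image


gemini_total = total_cost(IMAGES, GEMINI_FLASH_PER_IMAGE)
midjourney_total = total_cost(IMAGES, MIDJOURNEY_PER_IMAGE)
price_ratio = MIDJOURNEY_PER_IMAGE / GEMINI_FLASH_PER_IMAGE

# 352 images in batches of 4 is 88 batches of ~15 s each.
batches = math.ceil(IMAGES / BATCH_SIZE)
generation_minutes = batches * SECONDS_PER_BATCH / 60

print(f"Gemini Flash: ${gemini_total:.2f}")
print(f"Midjourney:   ${midjourney_total:.2f}")
print(f"Price ratio:  {price_ratio:.1f}x")
print(f"Generation time: {generation_minutes:.0f} min over {batches} batches")
```

That comes to about $14 against roughly $176 for the same 352 images. The price ratio is about 12.5x, and raw generation time is around 22 minutes.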

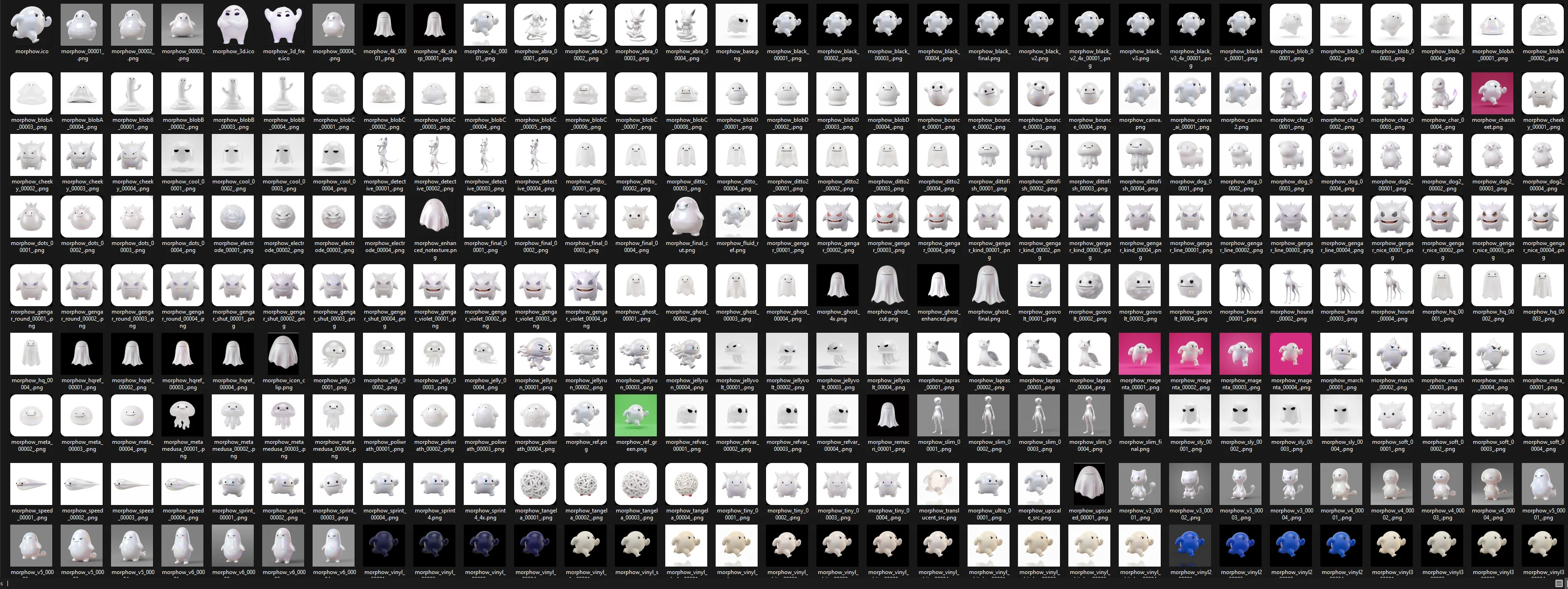

And that's exactly what I did.

Phase 1: the Pokemon starting point

Morphow's mascot is Morphow. The character and the brand are one and the same. Morphow = Morph On Web. And the nod to Ditto (Metamorph in French), the Pokemon that transforms into anything, isn't a coincidence. That's exactly what the product does: webapps that take the shape of the client's need.

My first prompts started from there. A white Ditto, in 3D, clay/vinyl style.

The result was cute. But pink. And too Pokemon. You could immediately see the reference. If someone at Nintendo ever stumbled on it, it would scream cease & desist.

I needed to move away from the source.

Phase 2: the dead ends

Here's where it gets interesting. Tell an AI "move away from Ditto but keep the spirit" and it goes everywhere.

Gengar direction: a white monster with red eyes and teeth. Aggressive, intimidating. Humanoid direction: a thin white alien, standing on two legs. Too anthropomorphic. Classic ghost: clean, smooth, completely generic. Jellyfish: iridescent tentacles, interesting but too aquatic.

And a dozen other directions. Each batch of 4 images took 15 seconds. In one evening, I had 50 different directions.

The problem: each direction had something good. But none had everything. The ghost had the right shape but no personality. The Gengar had character but was scary. The blob was original but unreadable at small sizes.

Phase 3: the breakthrough

Around iteration 80, I generated something that made me stop.

A white Pac-Man ghost. Half-closed, angular eyes. No mouth. Floating. Looking at you with this relaxed superiority. Like it knows something you don't.

That was the character. Not the exact design, but the attitude. Angular black eyes on white iridescent surface, no mouth. Expression through eyebrows. Minimal, recognizable, versatile.

From that point on, I narrowed down. No more divergence. Only convergence.

Phase 4: convergence (and frustration)

The next 120 iterations were surgery. Adjusting proportions. Testing poses (standing, walking, sitting). Varying material (vinyl, clay, glossy, matte). Fine-tuning the eyebrows, eye tilt, head-to-body ratio.
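
At this stage, convergence is essentially a controlled sweep: hold the character description fixed and vary one or two attributes at a time. Below is a minimal Python sketch of such a sweep. The pose and material lists come from the paragraph above. The base description string is hypothetical.

```python
from itertools import product

# Attribute values from the convergence phase described above.
POSES = ["standing", "walking", "sitting"]
MATERIALS = ["vinyl", "clay", "glossy", "matte"]

# Hypothetical description of the locked-in character.
BASE = "white floating ghost mascot, angular black eyes, no mouth"


def prompt_grid(base, poses, materials):
    """One prompt per (pose, material) pair, base description held fixed."""
    return [
        f"{base}, {pose} pose, {material} finish"
        for pose, material in product(poses, materials)
    ]


grid = prompt_grid(BASE, POSES, MATERIALS)
print(len(grid))  # 3 poses x 4 materials
```

Since the base text never changes, any difference between two outputs should come from the swept attribute (and the sampler). As the next paragraphs show, that assumption doesn't fully hold.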

And that's when I hit the wall.

AI generates images. Not characters. Every generation is unique. Proportions shift 2-3% between images. Eyes are never in exactly the same spot. One arm is slightly bigger than the other. The silhouette subtly changes from one batch to the next.

For a single image, it's imperceptible. For a character sheet (front, side, 3/4, back), it's a nightmare. You never get the same character from multiple angles. And for expressions (happy, sad, angry, neutral), it's worse: each emotion produces a slightly different character.
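
One way to put a number on that drift, assuming you hand-annotate a few landmarks (eye centers, silhouette extremes) on each render:

- Normalize each landmark set to the character's bounding box.
- Report the largest landmark displacement as a percentage of that box.

Normalizing cancels out position and overall scale, so only changes in shape register. The landmark names and pixel coordinates below are hypothetical.

```python
import math


def normalize(landmarks):
    """Map (x, y) pixel landmarks into the character's bounding box, 0..1."""
    xs = [x for x, _ in landmarks.values()]
    ys = [y for _, y in landmarks.values()]
    min_x, min_y = min(xs), min(ys)
    width, height = max(xs) - min_x, max(ys) - min_y
    return {
        name: ((x - min_x) / width, (y - min_y) / height)
        for name, (x, y) in landmarks.items()
    }


def max_drift_percent(a, b):
    """Largest landmark displacement between two renders, in % of the box."""
    na, nb = normalize(a), normalize(b)
    return 100 * max(math.dist(na[k], nb[k]) for k in na)


# Hypothetical hand-annotated landmarks (pixels) from two renders.
render_a = {
    "head_top": (50, 0), "base": (50, 100),
    "left_edge": (0, 50), "right_edge": (100, 50),
    "left_eye": (35, 40), "right_eye": (65, 40),
}
render_b = dict(render_a, left_eye=(37, 41))  # eye nudged by a couple of px

print(f"{max_drift_percent(render_a, render_b):.2f}%")
```

Nudging one eye by two or three pixels on a 100px character already registers as a drift of about 2%. That is the scale of inconsistency that ruins a character sheet.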

352 iterations. Dozens of "almost there". And always that 5% missing.

The pivot: from 3D to flat

I gave up at 3am. Physically, not mentally. In bed, I opened Gemini on my phone and started talking about the project. No image prompts. Just a conversation. What I wanted, what I couldn't get, why 3D was a dead end.

Gemini brought up the Intel strategy: simplify radically. A logo that works at 16px and at 4K. No reflections, no textures, no 3D. Flat. Vector. And it was right: my 3D iterations looked great at full size but were unreadable at small sizes. A favicon, a notification badge, a chat avatar: all of these need a character that's legible in a few pixels.
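
The 16px argument comes down to simple arithmetic. A detail that takes up a small fraction of the character shrinks to a fraction of a pixel in a favicon, and downscaling blends it away. The sketch below uses hypothetical feature sizes and an assumed 1px legibility threshold. That threshold is a rule of thumb, not a standard.

```python
# Hypothetical feature sizes, as a fraction of the character's width.
FEATURES = {"eye": 0.15, "eyebrow_stroke": 0.03, "specular_highlight": 0.02}


def legible_features(features, icon_px, min_px=1.0):
    """Features that still span at least `min_px` pixels at this icon size.

    The 1px threshold is a rule of thumb: anything thinner gets averaged
    into its neighbors when the image is downscaled.
    """
    return [name for name, frac in features.items() if frac * icon_px >= min_px]


for size in (16, 64, 512):
    print(size, legible_features(FEATURES, size))
```

At 16px, only a bold shape like the eye survives. Thin strokes and highlights drop out. Reflections and textures are exactly the kind of detail that disappears first.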

We decided: flat it is. And then Gemini proposed a version based on my 3D iterations. Same proportions, same angular eyes, same missing mouth. But flat, with a thin outline and a white iridescent fill.

Bingo. I broke the middle strokes on the body, kept the angular eyes, and the character was there. Not in 3D. Flat. Legible, versatile, and most importantly: reproducible.

The illustrator

I called Remi.

Remi Rohart, illustrator. But let me be clear: I didn't show up empty-handed asking "design me a mascot." I had a character that was 95% there. The shape, the attitude, the proportions, the material, the angular eyes, the missing mouth, the iridescence. 352 iterations to get there. What I didn't have was execution rigor: the same character from every angle, consistent expressions, and a usable vector file.

I sent him my best AI outputs as a visual brief. Not a 40-page spec. The images. "This is the character. I want exactly this, but clean. Multi-angle views, expressions for app states, and a do & don't guide."

He worked in Illustrator. In 48 hours, he had:

- The character vectorized in 6 views (front, left profile, 3/4, right profile, back, app icon)

- Exact proportions with construction guides

- 5 application expressions: determined (default), empty state, error, success, loading

- A "Do & Don't" guide: no clothing, no mouth, expression only through eyes and eyebrows, can hold objects but preferably avoid

- Allowed transformations (the character can morph into cubes, boxes, geometric shapes)

- The exact color palette (#2B0545, #F1E5FF) with a defined radial gradient center
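
A fixed two-color palette can be checked numerically. The sketch below computes the WCAG 2.x contrast ratio between the two palette colors, a quick legibility check. It also shows how a radial gradient blends between two colors around a center point. The brief doesn't specify which colors the gradient blends or where its center sits. Pairing these two colors, with the light one at the center, is purely an assumption for illustration.

```python
import math


def hex_to_rgb(color):
    color = color.lstrip("#")
    return tuple(int(color[i:i + 2], 16) for i in (0, 2, 4))


def rgb_to_hex(rgb):
    return "#{:02X}{:02X}{:02X}".format(*rgb)


def relative_luminance(color):
    """WCAG 2.x relative luminance of an sRGB hex color."""
    def linear(c):
        c /= 255
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
    r, g, b = (linear(c) for c in hex_to_rgb(color))
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(a, b):
    """WCAG contrast ratio, (lighter + 0.05) / (darker + 0.05)."""
    la, lb = sorted((relative_luminance(a), relative_luminance(b)), reverse=True)
    return (la + 0.05) / (lb + 0.05)


def radial_gradient_color(px, py, cx, cy, radius, inner, outer):
    """Linear radial blend from `inner` at (cx, cy) to `outer` at `radius`."""
    t = min(math.hypot(px - cx, py - cy) / radius, 1.0)  # clamp past the edge
    a, b = hex_to_rgb(inner), hex_to_rgb(outer)
    return rgb_to_hex(tuple(round(ca + (cb - ca) * t) for ca, cb in zip(a, b)))


DARK, LIGHT = "#2B0545", "#F1E5FF"
print(f"contrast {contrast_ratio(DARK, LIGHT):.1f}:1")
# Assumption: light at the gradient center, dark at the edge.
print(radial_gradient_color(50, 50, 50, 50, 40, LIGHT, DARK))
```

The two colors sit comfortably above WCAG's 7:1 threshold for text. That is one reason a thin dark outline on a light fill stays readable at small sizes.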

Clean Figma delivery. Every element ready to use in the interface.
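
With five fixed expressions, making the mascot "react to user actions" becomes a small lookup from interface state to asset. The Python sketch below shows that idea. The state field names, the priority order and the asset paths are all hypothetical, not Morphow's actual implementation.

```python
from enum import Enum


class Expression(Enum):
    """The five application expressions from the character sheet."""
    DETERMINED = "determined"  # default
    EMPTY_STATE = "empty_state"
    ERROR = "error"
    SUCCESS = "success"
    LOADING = "loading"


def expression_for(state):
    """Pick one expression for a UI state dict (hypothetical field names).

    Order matters: an error should never be hidden behind a loading or
    success expression.
    """
    if state.get("error"):
        return Expression.ERROR
    if state.get("loading"):
        return Expression.LOADING
    if state.get("just_completed"):
        return Expression.SUCCESS
    if state.get("item_count") == 0:
        return Expression.EMPTY_STATE
    return Expression.DETERMINED


def asset_path(expression):
    # Hypothetical asset layout: one exported SVG per expression.
    return f"mascot/{expression.value}.svg"


print(asset_path(expression_for({"loading": True})))  # mascot/loading.svg
```

The checks are ordered deliberately. Putting errors first means a failed request can't be masked by a spinner, and the default expression covers every state that isn't explicitly mapped.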

What Remi delivered in 48 hours, AI could never have done in 2,000 iterations: consistency. The same character, exactly the same, from every angle and in every emotion. Fixed proportions. Construction guides so anyone can reproduce it. A system, not an image.

But that delivery also solved a problem most "I made everything with AI" articles prefer to ignore.

The intellectual property gray zone

As the law stands, an AI-generated image can't be copyrighted. Not in France, not in the US. The US Copyright Office said it clearly in 2023: no human author, no copyright. In Europe, same deal: copyright protects original works resulting from human intellectual creation. A prompt, however elaborate, doesn't count as a sufficient act of creation.

In practical terms: my 352 ComfyUI images don't belong to me legally. Anyone could copy the same aesthetic, the same character, and I'd have zero recourse.

That's a problem when you're building a brand. Your mascot is your identity. If it can't be protected, it's worth nothing legally. And I hit that wall firsthand: I tried to register the character as a figurative trademark. Couldn't. No human author, no registration.

Let me be honest: I'm the first person to argue that you should pay illustrators properly. But I've been working for free for months. Zero revenue, pure investment. Paying an illustrator for a full character sheet was beyond what I could afford. But doing nothing was worse. So I found a middle ground: have an illustrator work three days instead of three weeks. Because the brief was already done. 352 iterations means 352 visual decisions. Remi didn't have to find the character. It was there. He just had to build it properly.

Remi took my AI outputs as a visual base and entirely rebuilt the character in Illustrator as clean vector art. His work is an original creation, protected by copyright from the moment it's made. The vector files, the character sheet, the expressions: all of it is legally mine (with rights transfer). I can register the mascot as a figurative trademark. I can take legal action if someone copies it.

AI generated the inspiration. The human generated the intellectual property.

This isn't a detail. For a SaaS that wants to raise funding, get acquired, or simply protect its identity, the question "does your mascot actually belong to you?" has a binary answer. And if the answer relies solely on AI outputs, it's no.

What I learned

AI is an explorer, not a craftsman. In 352 iterations, I covered a possibility space that a human brainstorm would have taken weeks to traverse. I tested directions I never would have considered (the jellyfish, the detective dog, the formless blob). Some were terrible. Others surprised me. And it's from that exploration that the final brief emerged.

But exploration isn't enough. At some point, you need to freeze. Decide that THIS character, with THESE proportions, THESE eyes, at THIS angle, is the one. And reproduce it identically 50 times. AI can't do that. Not yet.

And even if it could, the result wouldn't belong to you.

The real workflow isn't AI vs human. It's AI then human. AI opens the space. The human closes it. And the human signs.

And building my own generation tool made the exploration roughly 10x faster and, at ~$0.04 per image against roughly $0.50 on Midjourney, more than 12x cheaper. 352 iterations at ~$0.04 each comes to ~$14. The result was a visual brief so precise that the illustrator delivered in 48 hours with zero back-and-forth.

~$14 of AI and a good illustrator. That's how you design a mascot in 2026.